Share of AI Voice in 60 words

Share of AI Voice measures the share of AI answers (ChatGPT, Perplexity, Gemini, Claude, Mistral Le Chat) in which a brand is cited, compared to competitors. An empirical test on April 22, 2026 across 5 AI engines with the same FR beauty prompt yielded 26 brands cited, one unanimous (Clémence & Vivien), 77 percent of brands cited by a single engine. Measuring Share of AI Voice on a single engine is not Share of AI Voice — it’s a fragment.

Why this article exists

For six months the term “Share of AI Voice” has been appearing in board packs, Q2 OKRs, and executive committee slides. Rarely well defined. Articles published in 2026 recycle marketing definitions from US vendors without citing methodologies, without exposing known scientific limits, without sourced sectorial data, and without empirically testing what they describe.

This guide fills those three gaps: every figure is dated and traceable to a primary source (Fevad Q1 2026, Ahrefs Q1 2026, McKinsey 2025, Salesforce December 2025, Similarweb March 2026), an empirical test run on April 21-22, 2026 across the 5 AI engines accessible in France (Perplexity, ChatGPT, Gemini, Claude, Mistral Le Chat) with extracted verbatim and per-engine numerical analysis, and an open critique of the five methodological gaps current tools don’t cover. No invented figures, no extrapolation.

Article written by Kamil Kaderbay (founder of Verity Score, ex-Snackeet) based on this empirical execution. Published: April 22, 2026. Last updated: April 22, 2026.

Scope note. The methodology applies globally. The worked case study uses a French-language prompt on Perplexity to show how locale, media ecosystem, and tooling reshape the measurement — the same pattern holds in any non-US market (DE, ES, IT, BR, etc.). US/UK-only readers can skip the Fevad section and the “French alternatives” tool subsection. AI Overviews and AI Mode are not deployed in France at April 22, 2026 (neighboring rights blocker, €250M ADLC fine in March 2024); readers in US/UK/CA/AU markets where AIO is active should treat the AIO figures as directly applicable, while readers in France or other excluded markets should treat them as forward-looking.

Where does the Share of AI Voice term come from?

No actor can claim to have invented the term. It emerged in parallel across several vendors between late 2024 and 2025: Semrush formalizes it as “percentage of brand mentions weighted by position in the answer”, Ahrefs Brand Radar says “percentage of brand impressions out of total impressions”, Profound, Peec AI, Otterly.AI and AthenaHQ each add their variant.

The difference with the academic term Generative Engine Optimization (GEO), which has clear paternity (Aggarwal et al., Princeton, KDD 2024), matters: Share of AI Voice is an output KPI built by the industry, not a theoretical framework. It aggregates proprietary measurement methods that do not fully overlap.

Profound, founded in 2024 by James Cadwallader and Dylan Babbs, raised $96M at a $1B valuation in February 2026. It is the most visible pure-player, but not the inventor. No source confirms that.

How does Share of AI Voice differ from classic Share of Voice?

Three structural ruptures separate the two metrics.

1. From position to choice. In traditional SEO, appearing in position 5 is still presence. In an AI answer, only 3 to 5 brands are typically mentioned per query, and only the first three are visually retained by the reader. The model makes a choice, it does not list 10 links.

2. From impression to authority signal. Classic Share of Voice is roughly proportional to media or SEO spend: the more you pay, the more you appear. Share of AI Voice reflects a combination of authority, sources the model cites, and indexable content structure. McKinsey (October 2025) documents that only 5 to 10 percent of sources referenced by AI are brand-owned sites; the remaining 90 percent are publishers, UGC, third-party reviews and affiliates.

3. From click to citation. On AI search, click-through to sources collapses. Pew Research (July 22 2025) measured on 68,879 real queries that 1 percent of users click on sources cited in AI Overview. The win plays out on “being cited”, not “being clicked”.

Why Share of AI Voice matters in April 2026 (verified figures)

31 percent of French cyber-shoppers already use AI to buy (French market data point)

Fevad/Odoxa published on February 11, 2026 a study on French cyber-shoppers and generative AI. This is a France-specific figure included here as a proxy signal for non-US markets where AI adoption is often assumed to lag. Main figures: 31 percent of French cyber-shoppers use generative AI in their purchase journey, with strong skew toward young and high-income segments: 49 percent among 15-24, 46 percent among 25-34, 44 percent among executives, 40 percent among Paris-region residents. 58 percent use AI upstream of the purchase (research, comparison, selection). Equivalent figures for the US (Adobe Digital Insights Q1 2026: +393% AI referral traffic YoY to US retailers) are detailed below.

Methodology: Odoxa for Fevad, field in January 2026, representative sample (exact size in the Odoxa PDF).

Google organic CTR collapses when an AI Overview appears

Two independent studies converge. Seer Interactive (September 2025) measured on 3,119 search terms, 42 clients, 15 months, 25.1M organic impressions: -61 percent organic CTR on informational queries with AI Overview (1.76% to 0.61%) and -68 percent paid CTR on those same queries. Ahrefs (February 4 2026) measured on 300,000 keywords: -58 percent clicks on position 1 when an AIO is present.

These two figures should not be added or averaged. They measure different things (query type vs position) with different methodologies.

Only 38 percent of AIO citations come from Google’s top 10

Ahrefs (March 2, 2026) analyzed 863,000 keywords and 4 million AIO URLs. Result: 37.9 percent of URLs cited in AI Overviews are in Google’s top 10, down from 76 percent in the July 2025 study. 31.2 percent come from positions 11-100, and 31.0 percent are outside top 100. YouTube accounts for 18.2 percent of citations outside top 100, growing +34 percent in six months.

Important nuance reported by Ahrefs: the 76 → 38 drop is partly explained by improved parsing (more citations detected), not solely by a change in Google behavior. Mention this for intellectual honesty.

$67 billion in global sales influenced by AI during Cyber Week 2025

Salesforce (December 5, 2025) reports $67 billion in global Cyber Week 2025 sales influenced by AI and agents, or 20 percent of the total. Retailers equipped with an AI agent on their properties saw US growth of +13 percent over the past seven weeks, vs +2 percent for non-equipped retailers (7× the ratio).

Semantic caution: Salesforce had published in November 2025 a forecast of $73B. The actual post-event figure is $67B. Cite the right one.

Agentic commerce market projected at $3-5 trillion by 2030

McKinsey QuantumBlack (October 2025) projects $3 to $5 trillion in global retail revenue orchestrated by AI agents by 2030, of which $1 trillion in the US alone. Not to be confused with Gartner’s forecast of $15 trillion of B2B spend intermediated by AI agents by 2028, which covers a different perimeter (B2B, not B2C DTC).

The 3 dimensions of a serious Share of AI Voice

No vendor publishes a strictly identical formula. But consolidating practices from Semrush, Ahrefs Brand Radar, Profound, Peec AI, Otterly and AthenaHQ, three dimensions emerge as operational backbone.

Dimension 1: Citation rate

Operational definition: share of answers where the brand is cited across the set of collected answers. Semrush formalizes the calculation as the ratio of brand mentions to the median of top competitor mentions in the sector (not mean, not sum). Ahrefs weights impressions by the Google search volume of modeled keywords.

Out of 100 commercial prompts in a DTC category, across ChatGPT, Perplexity, Gemini and Claude, how many cite your brand? Without a figure per engine per query type, you have an intuition, not a metric.

Dimension 2: Brand context

Operational definition: quality of the citation context. Breaks into three sub-axes.

a) Position in the answer. Advanced Web Ranking publishes the only explicit formula found: rank 1 = 1.0, rank 2 = 0.9 … rank 10 = 0.1. Adobe LLM Optimizer also distinguishes “placement and prominence” in its Brand Presence dashboard. Other vendors don’t publish a precise weighting.

b) Sentiment. Positive, neutral, negative. Semrush exposes it as “Sentiment Signals”, Peec, Otterly, AthenaHQ, Profound display it too, but none of the seven main tools publishes its classifier. In practice, available methods range from simple lexicon (VADER, 56 percent agreement with human judgment) to BERT-based classifiers (85-95 percent) up to LLM-as-a-judge (often superior).

c) Extracted distinctive attributes. This is the least tooled sub-dimension. When your brand is cited, how is it cited? With your USP, price positioning, certifications, active ingredients? Or buried in a list of eight competitors with no distinctive context? None of the seven main tools exposes structured attribute extraction (price, labels, certifications, formulation). It’s a real methodological gap, not an opinion.

Dimension 3: Answer coverage

Operational definition: share of a category’s query tree where the brand appears at least once. Peec AI formalizes a three-layer framework (journey stage × segment × geo): 10 to 20 awareness + 20 to 30 consideration + purchase. Profound offers the “Prompt Volumes” tool to estimate prompt volume per topic from their 200M+ conversation dataset.

A typical DTC category tree has several hundred long-tail queries when crossing product-type × need × persona × constraint. Commercial SMB plans cap at 25-100 prompts, which covers only a fraction. A brand that dominates five queries and is invisible on 495 is visible to 1 percent of its addressable market.

The three dimensions move independently. A brand can gain citation rate while losing brand context (more mentions listed but less distinctive mentions), or gain answer coverage without improving either. That’s why a single Share of AI Voice number means nothing in isolation.

Which tools measure Share of AI Voice in 2026?

Comparison grid of main tools with public pricing as of April 21, 2026. Prices are those displayed by editors or cross-checked via third-party reviews. Qwairy and Meteoria are France-based tools with native FR support and EU hosting; they are the top fit for French-market brands and a rare option for brands that need Mistral Le Chat coverage, but US/UK brands without a France exposure should prioritize the US-native tools (Profound, Otterly, Peec, Semrush, AthenaHQ).

| Tool | Entry monthly price | Prompts (entry plan) | Included engines | Fits DTC €2-20M |

|---|---|---|---|---|

| HubSpot Share of Voice | Free unlimited | Variable | ChatGPT GPT-5.2, Perplexity, Gemini | Yes, baseline |

| Qwairy | €49 (FR, EU hosting) | Not fully published | 9 engines including Mistral Le Chat | Yes, top FR fit |

| Meteoria | €75 (FR) | Not fully published | Multi-engine, e-commerce module | Yes, top FR fit |

| Otterly.AI | $29 | Not published | ChatGPT, Gemini, AI Overviews, AI Mode, Perplexity, Copilot | Yes, entry tier |

| Peec AI | €85 | 25 prompts | ChatGPT, Perplexity, AI Overviews included | Yes |

| Semrush AI Toolkit | $99 add-on | DB of 213M prompts | ChatGPT, AI Overviews and more | Yes as Semrush complement |

| Profound | $99 | 50 prompts / 12k runs | 10+ engines (Starter) | Yes, lower end |

| AthenaHQ | $95 (annual) | Not published | ChatGPT, Claude, Perplexity and more | Yes |

| Similarweb GenAI | $99 | N/A (traffic, not SoV) | 4 engines | Complement, not replacement |

| Advanced Web Ranking | $139 | Included in SEO plan | Multi-engine | Yes |

| Ahrefs Brand Radar | Included Enterprise | 300M+ modeled prompts | ChatGPT, Perplexity, Gemini, Copilot, AIO/AI Mode | No (Enterprise tier) |

Methodological note: Similarweb does not measure presence in AI answers but outbound referral traffic from chatbots to sites. It’s a complementary measure (downstream impact), not a Share of AI Voice in the strict sense. An AI mention without a click (majority case in zero-click) is invisible to Similarweb.

Are there French alternatives?

Two French actors deserve separate mention. Qwairy (from €49 per month, EU hosting) covers 9 engines including Mistral Le Chat, which no US vendor does as of April 21, 2026. Meteoria (from €75) offers UI scraping and a specific e-commerce module, with native FR support.

For a French DTC €2-20M starting without an AI visibility stack, the most rational combo remains: HubSpot SoV as free baseline + Qwairy or Meteoria as main tool + a quarterly DIY audit to verify what the commercial tool says. Total budget: under €100 per month.

The 5 methodological gaps current tools don’t cover

After reviewing public methodologies of the seven main tools (Profound, Peec, Otterly, AthenaHQ, Semrush, Similarweb, Brandwatch), five factual gaps emerge, all sourceable.

1. Structured extraction of distinctive attributes. No tool offers automatic, scored extraction of your USP, certifications (organic, vegan, cruelty-free, made in France), price range, active ingredients, or use cases in AI mentions. The fine brand context dimension is treated either as black box (sentiment), or not at all.

2. Freshness. How fast does content optimization propagate to AI answers? Two days? Two weeks? Two months? No vendor publishes a measurable SLA, and no academic study quantifies it publicly.

3. Citation reliability. Academic literature has tools (CiteGuard 2025, VeriCite 2025, REASONS 2024) to distinguish truthful from hallucinated mentions. No commercial tool exposes this distinction. A brand cited 10 times with 5 associated hallucinations has a raw Share of AI Voice of 10, a corrected Share of AI Voice of 5 only.

4. Absence of confidence interval. None of the seven tools publishes a formula like “N prompts × M runs yields a 95 percent CI of ±X percent”. Scores are displayed as precise numbers when they are statistical estimates with wide variance. Academic benchmarks require 10 runs; most commercial tools do 1 run per day.

5. Baseline break on model version change. When GPT-5.2 moves to GPT-5.3, or Gemini 2.5 to Gemini 3, the answer distribution changes. No vendor publicly documents how they handle this break: retroactive recalculation? Break mark in time series? Widened uncertainty margin? The subject is not addressed.

These five gaps are not bad-faith critiques: they are factual, verifiable, and each poses a concrete problem for an e-commerce director who wants to pilot a board-level KPI.

How to measure your Share of AI Voice in 10 steps (tested DIY protocol)

The protocol below is executable by a non-technical Head of E-commerce with Notion, Google Sheets, and free interfaces of ChatGPT, Perplexity, Gemini. Budget: $0 to $30. Realistic first-time: 14 to 18 hours spread over 2 to 3 weeks, then 6 to 8 hours per month in recurring. The “2 to 4 hours over 5 to 7 days” claim circulating only holds for a non-statistically-defensible minimalist version.

Step 1. Scope the category. One only (not “skincare” but “vitamin C serum clean beauty France”). A closed competitor list (5 to 10 brands max). A market (FR). Three dimensions to measure.

Step 2. Generate the prompt tree. 30 prompts minimum, built in layers: Peec framework journey × segment × geo (15 prompts from an awareness/consideration/purchase × segments grid), plus Chain-of-Thought query expansion (Jagerman 2023) on a seed. 50 percent of prompts must come from your internal sources (support tickets, reviews, Reddit) to avoid LLM circularity.

Step 3. Consolidate the brand table. Exact names, spelling variants, official domain. Without this table, mention detection is noisy.

Step 4. Neutralize the environment. Private browsing, logout from accounts, FR browser language, default geolocation, one prompt = one window. No follow-up in same session. Even in incognito, LLMs remain non-deterministic server-side (Thinking Machines Lab): an accepted limit.

Step 5. Execute prompts on 3 engines × 3 runs. 30 prompts × 3 engines × 3 runs = 270 responses. Three runs is the viable floor; academic benchmarks require ten. Manual copy-paste into Google Sheets (scraping UI automation violates ChatGPT and Perplexity ToS).

Step 6. Annotate. For each response: brands cited, position, list of source domains, context sentence around your brand. Budget roughly 4 minutes per response for human annotation.

Step 7. Compute the three scores. Citation rate global and per engine (Semrush: ratio to median of top competitors). Brand context (AWR position + manual 3-level sentiment + distinctive attributes). Answer coverage (share of distinct prompts with at least one mention).

Step 8. Build the Notion dashboard. Category, locale, baseline date, version of models tested (essential: any update breaks baseline). Scores table + coverage-gap heatmap + top 10 cited sources (earned media roadmap).

Step 9. Interpret without over-interpreting. A 5-point delta between you and a competitor, with potential 15 percent run-to-run variance (Atil et al. 2024), is not significant. Publish a range (25-30 percent), not a precise number (27.3 percent).

Step 10. Cadence monthly. Freeze the prompt list. Log the model version each session. On version break, create an explicit baseline cut.

Case study: same FR beauty prompt, 5 AI engines tested (April 21-22, 2026)

To verify whether Share of AI Voice findings hold across engines, the same prompt was run on five AI engines accessible in France: Perplexity, ChatGPT, Gemini, Claude and Mistral Le Chat. Distinct sessions, private browsing where possible, FR locale, single run per engine. Identical prompt: “Meilleures marques de sérum vitamine C clean beauty France”.

Overall result: 26 brands cited, only one unanimous

Key figures.

- 26 distinct brands cited in total, across 5 engines

- 1 brand cited by all 5: Clémence & Vivien

- 1 brand cited by 4 engines: Typology (absent from Mistral)

- 1 brand cited by 3 engines: Endro

- 3 brands cited by 2 engines: Caudalie, Novexpert, Nuxe

- 20 brands cited by a single engine, i.e. 77 percent of the total

Perplexity’s dominance does not predict other engines. Aroma-Zone, Perplexity’s top 3, is entirely invisible on the four others. Typology, 5 placements on Perplexity, is absent from Mistral. Brands unknown to Perplexity (Endro, La Canopée, Patyka, Dermi Paris, Yepoda, Innisfree, Odacité) dominate elsewhere.

The true Share of AI Voice consensual winner for FR clean beauty vitamin C in April 2026 is Clémence & Vivien, an indie bio budget brand (Sérum Illuminant around €25). Not Typology. Not Caudalie. Not Sephora. This counter-intuitive result is invisible to any tool measuring Share of AI Voice on a single engine.

Engine-by-engine deep dive

The five engines produce radically different response structures, source profiles, and brand hierarchies. The subsections below document each observed behavior.

Perplexity — 8 brands, 3-tier hierarchy, per-brand source attribution

Perplexity cites 8 brands and builds a 3-tier hierarchy exposed by its sub-section structure (intro, brands to watch, use cases, quick top 3).

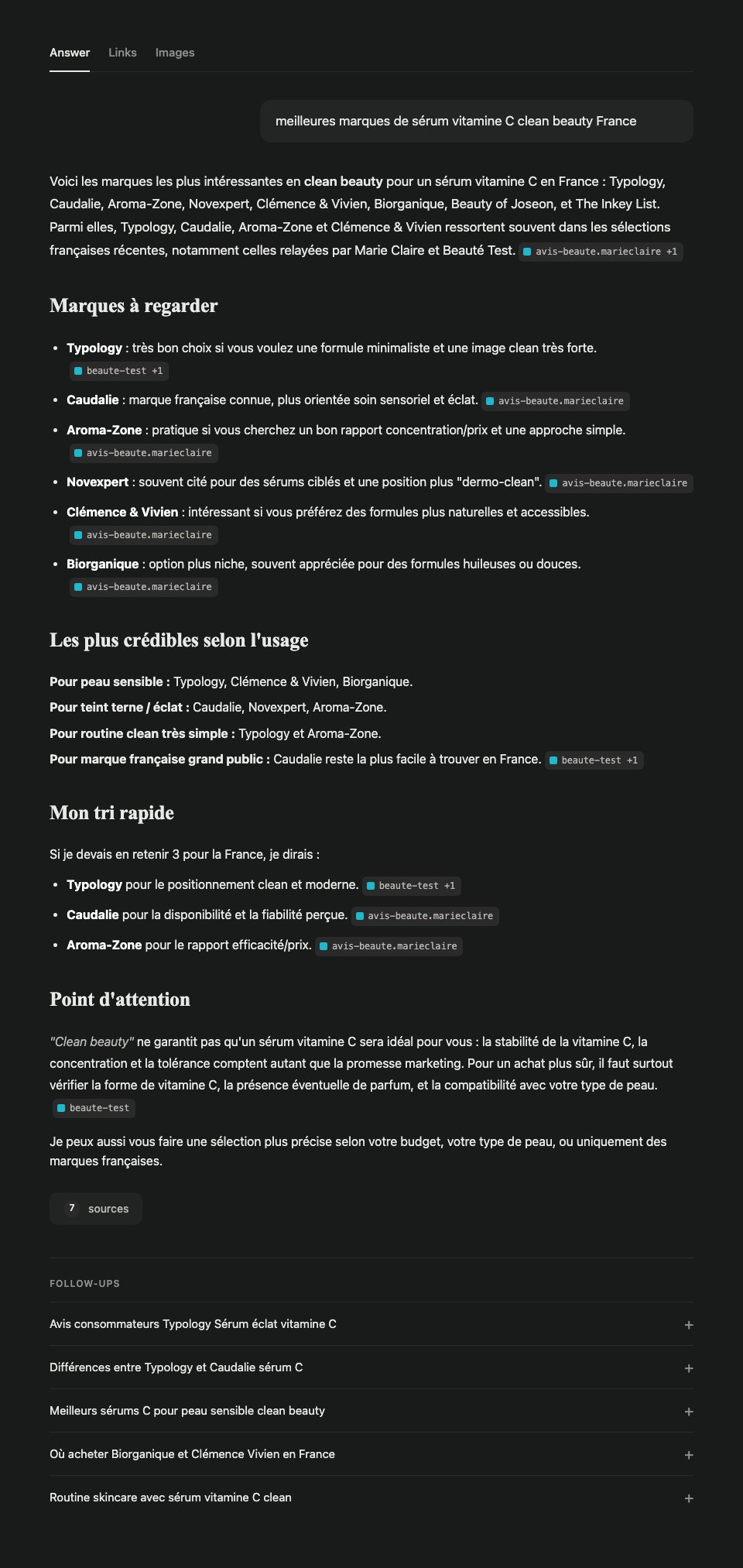

Perplexity verbatim (DOM extract)

Here are the most interesting clean beauty brands for a vitamin C serum in France: Typology, Caudalie, Aroma-Zone, Novexpert, Clémence & Vivien, Biorganique, Beauty of Joseon, and The Inkey List. Among them, Typology, Caudalie, Aroma-Zone and Clémence & Vivien often stand out in recent French selections, notably those relayed by Marie Claire and Beauté Test.

My quick tri. If I had to keep 3 for France, I’d say:

- Typology for clean and modern positioning.

- Caudalie for availability and perceived reliability.

- Aroma-Zone for efficacy/price ratio.

Stratification of the 8 Perplexity brands

| Brand | Intro | Detail bullets | Per use case | Top 3 | Placements |

|---|---|---|---|---|---|

| Typology | 1st | 1st | Sensitive skin + simple routine | 1st | 5 |

| Caudalie | 2nd | 2nd | Glow + mass market | 2nd | 5 |

| Aroma-Zone | 3rd | 3rd | Glow + simple routine | 3rd | 5 |

| Novexpert | 4th | 4th | Glow | — | 3 |

| Clémence & Vivien | 5th | 5th | Sensitive skin | — | 3 |

| Biorganique | 6th | 6th | Sensitive skin | — | 3 |

| Beauty of Joseon | 7th | — | — | — | 1 |

| The Inkey List | 8th | — | — | — | 1 |

Beauty of Joseon and The Inkey List are phantom mentions: cited in intro then gone. A tool that counts only “brand mentioned: yes/no” ignores this stratification. Between five placements and one, the brand-context difference is significant.

Perplexity sources: per-brand attribution

Perplexity advertises “7 sources” but only two unique domains are visible, and Perplexity’s specificity is that the source is attributed to each brand individually (not just a global list). Observed distribution:

| Brand | Perplexity source |

|---|---|

| Typology | beaute-test.com (+1) |

| Caudalie | avis-beaute.marieclaire.fr |

| Aroma-Zone | avis-beaute.marieclaire.fr |

| Novexpert | avis-beaute.marieclaire.fr |

| Clémence & Vivien | avis-beaute.marieclaire.fr |

| Biorganique | avis-beaute.marieclaire.fr |

Marie Claire backs 5 brands out of 6. Beauté Test carries Typology alone. Zero brand-owned site visible. For this category on French Perplexity, the royal road is an up-to-date Marie Claire article. A single publisher does 80 percent of the citation work.

Numerical analysis of Typology on Perplexity

Perplexity Citation Rate: 1/1 = 100 percent on this single prompt (not statistically significant on a single run). Brand Context: position 1.0 (rank 1 across 5 placements) + sentiment +0.6 + distinctive attributes 3/6 → composite score 77 out of 100. Answer Coverage: 3.3 percent minimum confirmed coverage on a 30-prompt tree. These figures are Perplexity-only. The following sections show why cross-engine extrapolation radically changes the result.

Gemini — 4 brands, product concentrations, zero visible sources

Gemini responds with a 4-brand comparative table plus a per-skin-type section:

- Typology — Sérum Éclat L32, 10 percent stabilized vitamin C, vegan, made in France

- Clémence & Vivien — Sérum Illuminant, 8 percent ascorbyl glucoside, certified organic

- Endro Cosmétiques — Sérum Bonne Mine, 10 percent vitamin C + astaxanthin, organic

- La Canopée — Sérum Synergie Essentielle, citrus extracts and oils

Then “Top Choice by Skin Type”: Clémence & Vivien for sensitive skin, Endro for maximum glow, Typology for price/quality ratio.

Gemini signature. No visible inline sources, purely generative answer. Compensates the source absence with precise concentrations and chemical forms (ascorbyl glucoside, L-ascorbic acid, Sodium Ascorbyl Phosphate) that Perplexity doesn’t expose. Bias toward French indie bio brands (Endro, La Canopée, Clémence & Vivien). Typology (Perplexity’s dominant) is cited but not at the top. Caudalie and Aroma-Zone (Perplexity’s top 3) are entirely absent.

Claude — 14 brands, personalization detected via memory

Claude lists 14 brands split into three sections: French clean beauty (Patyka, Typologie [sic], Caudalie, Melvita, Nuxe, Apicia), pharmacy/dermocosmetics (La Roche-Posay, SVR), independent organic (Endro, Clémence & Vivien, Oden, oOlution, Les Happycuriennes, Novexpert).

Claude signature. Three critical observations.

1. Wide catalog. Claude cites 14 brands versus 4 for Gemini and 8 for Perplexity. The engine behaves like an encyclopedic catalog rather than a curator.

2. Factual error. Claude spells “Typologie” with a trailing “e”. The official brand name is Typology without the “e”. Sign that Claude treats the name as a French common noun rather than recognizing it as a proper entity. Impact: a Share of AI Voice tracker matching on exact spelling would miss this mention.

3. Memory-based personalization. Claude literally writes: “SVR — Gamme [C]xpert bien positionnée […] (tu connais bien cette marque)” and closes with “focus sur les marques […] comme celles de ton portefeuille”. Claude uses conversation memory to orient its recommendations. Any Claude Share of AI Voice measurement must therefore be done in an incognito session, new account, with memory disabled — otherwise results reflect the user more than the category.

Mistral Le Chat — 5 brands, 5 sources including a brand-owned site

Mistral cites 5 brands: Clémence & Vivien (Sérum Illuminant, rated Excellent on Yuka), Endro (organic glow serum), Dermi Paris (Vita Glow 15 percent vit C + turmeric + arbutin), Yepoda (K-beauty), Innisfree (K-beauty).

Mistral signature. 5 visible dated sources: avis-beaute.marieclaire.fr (January 2024), nouvojour.fr (January 2026), dermi.fr (January 2026, brand-owned site), vogue.fr (July 2025), actu.fr. Unlike Perplexity, Mistral cites dermi.fr, a brand-owned site, as the primary source for the Dermi Paris recommendation. Most diversified retrieval profile of the 5 engines: mainstream media mix + aggregator (Nouvojour) + brand-owned site + international K-beauty. Mistral also has a strong FR local bias.

ChatGPT — 7 brands, mixed sources Vogue and brand-owned

ChatGPT proposes 7 brands split into FR clean (Typology 11 percent, Odacité, Clémence & Vivien, Nuxe) and international (Pai Skincare, Antipodes, The Ordinary), followed by a 4-column comparative table (clean level, efficacy, price) and per-skin-type recommendations.

ChatGPT signature. 4 visible sources with counters: Vogue France (cited 6 times on Typology), Odacité (cited 6 times — brand-owned site), NUXE (cited 5 times — brand-owned site), Vogue France again on Antipodes. Like Mistral, ChatGPT cites brand-owned sites (Odacité, NUXE) as primary sources. In this category, about 40 percent of visible ChatGPT citations are brand-owned. This contradicts the McKinsey 5-10 percent rule taken as universal — it holds on average, not for ChatGPT FR beauty.

Ten inter-engine lessons

The 5-engine test surfaces ten lessons no single engine can reveal. The first seven also hold for the Perplexity sub-section; the last three are cross-engine exclusives.

- Perplexity’s top 3 is Marie Claire’s hierarchy. On the Perplexity sub-section, 5 out of 6 argumented brands are backed by a single Marie Claire article. Perplexity FR beauty SAIV is 80 percent a deduplicated Marie Claire SoV.

- Perplexity’s “Point of attention” is a lever for brands publishing form and concentration. Perplexity recommends verifying vitamin C form, concentration and tolerance. Gemini confirms this signal by exposing the same attributes in its recommendations. Brands publishing explicit technical specs stack on both engines.

- Perplexity follow-ups are free candidate prompts. Typology reviews, Typology vs Caudalie differences, Sensitive skin, Where to buy Biorganique. Reformulations to integrate as tier 0 of the prompt tree.

- A single run says nothing about variance. True intra-engine (Atil et al. 2024: 15 percent run-to-run variance), also true cross-engine (see lesson 8).

- Perplexity builds an explicit middle tier. Novexpert, Clémence & Vivien, Biorganique are argumented but not in the quick top 3. Moving from middle to top tier on Perplexity = structured earned media (press awareness, cumulative reviews), not better features.

- Tension between general training and local hierarchy. Beauty of Joseon and The Inkey List (K-beauty, indie UK) are cited via general training but pushed to the end on Perplexity because FR RAG sources don’t carry them. Rule: local sources > general training. Pattern confirmed on Mistral (Yepoda, Innisfree in “international” section) and ChatGPT (Pai, Antipodes, The Ordinary in “international” section).

- Source concentration per category on Perplexity. Marie Claire + Beauté Test for this category. Pattern found for other FR beauty categories: 2-3 dominant publishers. Absent from those 2-3 = invisible on Perplexity.

- Cross-engine variance crushes intra-engine variance. On this test, 77 percent of cited brands are cited by a single engine. Atil et al. measure 15 percent run-to-run variance. Cross-engine variance is therefore about 5 times larger. A SAIV measured on 1-2 engines captures at best 40-60 percent of the real signal. Most 2026 commercial tools (Peec AI, Otterly, Profound Starter) fall in that hole.

- The unanimous brand isn’t the one you’d guess. Clémence & Vivien, an indie bio brand at €25 per serum, is the only brand cited by all 5 engines. Typology, Perplexity’s dominant, is missing from Mistral. Caudalie and Aroma-Zone (Perplexity top 3) are nearly invisible elsewhere. Consensual SAIV rewards cross-source coverage (Nouvojour + Marie Claire + Yuka + Vogue + aggregators), not mainstream press fame.

- Each engine has a distinct source-type signature. Perplexity = tight FR media cluster, per-brand attribution, zero brand-owned. Gemini = generative, zero visible sources, structured product attributes. Claude = wide catalog + memory personalization. Mistral = media mix + brand-owned + K-beauty. ChatGPT = Vogue + brand-owned (Odacité, NUXE). Optimizing cross-engine SAIV requires 5 parallel strategies, not one generic GEO strategy.

Why brand-owned sites are rare (and the exceptions that matter)

A nuanced result: across the 5 tested engines, 3 out of 5 engines cite no brand-owned site, 2 cite them regularly.

The “no brand-owned site” pattern holds on 3 out of 5 engines

- Perplexity : 0 visible brand-owned site. Marie Claire + Beauté Test cluster.

- Claude : 0 brand-owned site explicitly exposed (implicit sources).

- Gemini : 0 visible source at all (purely generative response).

On these 3 engines, the McKinsey rule (5-10 percent of brand-owned sources) holds, and even approaches the lower bound.

2 out of 5 engines do cite brand-owned sites

- ChatGPT cites Odacité and NUXE as primary sources. In this category, ~40 percent of visible ChatGPT citations are brand-owned.

- Mistral Le Chat cites dermi.fr as the primary source for Dermi Paris, on par with media.

The McKinsey 5-10 percent rule is a cross-engine average. It masks strong structural variance between engines: Perplexity at 0 percent brand-owned, ChatGPT at ~40 percent in certain categories.

Why Perplexity, Gemini and Claude exclude brand-owned sites

Five structural causes combine when an engine excludes brand-owned sources.

1. Domain authority asymmetry. Marie Claire, Beauté Test, Vogue have authority built over 20 years, indexed long-term, relayed by thousands of backlinks. A DTC at €2-20M has authority of 3-8 years. RAGs favor older authority.

2. Comparative surface asymmetry. “Best brands of X” is intrinsically comparative. A Marie Claire listicle comparing 8 brands is a perfect semantic match. A mono-brand product page cannot deliver an inter-brand hierarchy.

3. Self-referential bias. A brand site stating “we are the best” is discounted by models trained to detect self-promotion. Marie Claire can say “Typology is the best” without apparent conflict of interest.

4. Editorial volume and frequency. Publishers produce hundreds of articles per year. Brand sites a few per month, mono-brand. Models value freshness and topical density.

5. Answer-engine UX design. Perplexity consolidates “7 sources” into 2 visible badges. Optimized for visual economy, not diversity.

Why ChatGPT and Mistral accept brand-owned sites: 3 hypotheses

H1 — More permissive RAG. ChatGPT and Mistral seem to use a less-penalizing RAG scoring for mono-brand domains, especially with explicit categorical content (NUXE “vitamin C serum” page, dermi.fr with comparative blog post).

H2 — Bias toward “native” sources. ChatGPT exposes a per-brand source counter (6 for Typology and Odacité, 5 for NUXE). A brand site with a detailed PDP and structured specs counts as a valid source.

H3 — Different training data. Base models (GPT-5.x, Mistral Large) possibly have more brand-owned content in their training corpora. When RAG returns few FR media results, the model falls back on training, where well-indexed brand sites appear.

What should a brand do: strategies per target engine

Since each engine has its own signature, a DTC €2-20M cannot optimize everywhere simultaneously. Prioritization depends on your priority engine per your analytics (GA4 Custom Channel Group for AI, visible referrer traffic).

If your priority is ChatGPT (68 percent of global Gen AI traffic)

- Lever #1: solid on-site SEO. ChatGPT falls back on your site if your categorical content is structured. Complete PDP with schema.org Product, AggregateRating, Brand, Offer, hasMerchantReturnPolicy.

- Lever #2: presence in Vogue France listicles. Vogue is a major ChatGPT source on FR beauty. Targeted PR.

- Lever #3: rich categorical blog on your domain (not just mono-product). “Guide to clean beauty vitamin C serum”, honest comparative articles.

If your priority is Perplexity (most traceable SAIV, strong visibility on FR commercial queries)

- Lever #1: get cited in Marie Claire and Beauté Test listicles for your category. A single up-to-date Marie Claire article does 80 percent of the work.

- Lever #2: quarterly monitoring of seasonal listicles (“best serums 2026”, “winter anti-aging”) in those 2 sources.

- Lever #3: targeted beauty PR on avis-beaute.marieclaire.fr and beaute-test.com, not generic press.

If your priority is Gemini (18-20 percent of Gen AI, exposes product specs)

- Lever #1: expose structured product attributes that Gemini picks up. Exact concentration, chemical form, certifications, country of manufacture.

- Lever #2: enriched schema.org Product with

additionalPropertyfor concentration/form,brandwithsameAsto Wikidata/LinkedIn. - Lever #3: product FAQ page formulated as technical questions, not marketing.

If your priority is Claude (niches and long tail, conversational)

- Lever #1: long-tail awareness. Claude cites by broad recognition, not by media listicles.

- Lever #2: partnerships in secondary vertical sources. Slow Cosmétique, Nouvojour, Véganie, Doctissimo for FR beauty.

- Lever #3: educational brand content (glossaries, usage guides) well indexed and accessible.

If your priority is Mistral Le Chat (FR engine, local bias)

- Lever #1: on-site SEO accepted. Mistral willingly cites brand-owned sites. Rich categorical page on your domain with dated comparative content.

- Lever #2: presence in FR aggregators. Nouvojour, Véganie, Doctissimo.

- Lever #3: Yuka and transparent rating. Mistral explicitly cites Yuka as a source. A good Yuka rating becomes an indirect signal.

3 transversal levers that pay on all 5 engines

- Complete schema.org Product. AggregateRating, Brand, Offer, hasMerchantReturnPolicy, shippingDetails, additionalProperty. Read by the crawlers of all 5 engines.

- FR Reddit and forums. r/SkincareAddictionFR, Doctissimo. Indexed by all 5 engines. An authentic thread has higher RAG weight than a brand page on several engines.

- llms.txt + AI-friendly pages. llms.txt file at root pointing to your categorical structured pages. Marginal effect today but free and builds discipline.

What doesn’t or barely works: pure SEO on competitive queries (no AI engine strictly follows Google ranking), massive brand-owned backlinks without fresh content, generic PR unrelated to your specific category.

Scientific limits to know

LLM non-determinism

Even at temperature zero, hosted LLMs are not deterministic. Main cause: GPU inference batch-size varies with load, and floating-point calculations are not batch-invariant. Thinking Machines Lab documented the technical origin. Atil et al. (arXiv 2408.04667) measure up to 15 percent accuracy variance between two identical runs and up to 70 percent best vs worst spread on some benchmarks.

Consequence: any Share of AI Voice number is a wide-variance estimate. The statistical floor is 3 runs; academic studies use 10.

Prompt-Reverse Inconsistency

The Prompt-Reverse Inconsistency (PRIN) paper shows LLMs give contradictory judgments on the same candidate set when prompt order is reversed. Implication: the order in which brands are listed in a benchmark prompt influences model responses.

Cognitive biases on product recommendations

The Bias Beware paper (arXiv 2502.01349) measures that LLMs recommend luxury brands in 88 to 100 percent of cases when the locale indicates a high-income country, and non-luxury brands for low-income countries. A Share of AI Voice measured with US English prompts can be very different from a score measured in French FR.

Inter-engine and inter-version variance

ChatGPT, Perplexity, Gemini, Claude give different answers for the same query, and each model update (Gemini 3, GPT-5.3 etc.) breaks historical baseline. No commercial tool publicly documents its recalibration procedure.

And in France? The special status of AI Overviews

As of April 21 2026, neither AI Overviews nor AI Mode are deployed in France. The legal blocker stems from neighboring rights and commitments Google made after the €250M fine of March 2024. The European rollout of October 8, 2025 covered 36 countries including Germany, Austria, Switzerland, Spain, Italy, Sweden, Poland, Belgium, Netherlands, but not France.

Consequence for French brands: Share of AI Voice measurement focuses on engines accessible in France: ChatGPT Search, Perplexity, Claude, Gemini (app), Copilot, and Mistral Le Chat. AI Overviews should not be in the grid until Google activates it.

This exclusion is a transient advantage for FR brands: US retailers are already hammered by AI Overviews (Adobe measures +393 percent AI traffic to US retailers in Q1 2026), FR retailers have a quarter or two of respite to prepare. Those who use this delay to structure their data and earned media will hold a lasting lead.

How much does Share of AI Voice tracking cost in 2026?

Three budget tiers emerge in April 2026.

Free tier ($0). HubSpot Share of Voice + quarterly DIY. Allows setting a baseline and understanding what’s measured. Not enough for monthly piloting.

SMB tier ($50 to $250/month). Qwairy from €49, Meteoria from €75, Peec AI Starter at €85, Otterly at $29, Semrush AI Toolkit add-on at $99. The sweet spot for a DTC €2-20M that wants monthly piloting with a defensible protocol.

Growth tier ($250 to $1,500/month). Profound Growth at $399, Peec Pro at €205, AthenaHQ at $295 monthly, Semrush One at $199-549. Indicated when the company needs automated multi-category reporting and sectorial benchmarks.

Enterprise tier (> $2,000/month). Profound Enterprise, Ahrefs Brand Radar in Enterprise plans, Similarweb GenAI in upper tiers. Indicated for brands > €50M with dedicated data teams.

Common sense rule: do the DIY protocol once before subscribing. It tells you what you’re measuring, verifies what the commercial tool says, and avoids paying $400 for a figure you don’t know how to interpret.

Conclusion

Share of AI Voice is a real KPI, not a buzzword. But it’s a probabilistic KPI, with wide variance, and measurements that don’t overlap between vendors. Piloting it seriously requires: three measured dimensions (citation rate, brand context, answer coverage) and not a single number, repeated runs (three minimum, ten ideally) and not a one-shot, a serious prompt tree (30 minimum, 50 to 150 for a reference), explicit disclosure of limits (variance, model version, locale), and empirical cross-check on a sample of real responses.

For French brands, AI Overviews exclusion offers a relative quarter or two of lead over US competitors. The best use of that delay is to structure product attributes (full schema.org Product, hasMerchantReturnPolicy, shippingDetails, AggregateRating), work presence on the 2-5 media sources that dominate each category, and set a Share of AI Voice baseline before competitors do.

If you want an initial audit of your AI visibility (schema.org read, detection of absences on AI entities, agentic commerce score), Verity Score’s free GEO audit produces it in 3 minutes. It does not replace a rigorous Share of AI Voice measurement, it precedes it: knowing what your product pages expose to AI crawlers before measuring what engines do with them.

To go further on foundations: AEO 2026, What is GEO, State of AI Commerce 2026.